Headjam’s Maya 2016 Render Farm

Headjam is getting more muscle for 3D animation and visual effects projects.

Autodesk Maya is the software we use when working in 3D at Headjam. With Maya we can create animations and special effects for film and advertising. To get the creative work from Maya’s virtual world to our audience, all of our work must first go through a process called rendering. Rendering is what happens once the animator has finished designing within Maya’s interface: Maya transforms the work into a viewable (and hopefully beautiful) image. It’s hard to describe exactly what’s happening; the main thing to understand is that the task is computationally intensive and often takes hours, if not days. Render time is hard to predict. A five-minute animation could take anywhere from 8 hours to 8 weeks to 80 million years, depending on what goes into it.
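To give a feel for why the range is so wide, here is a rough back-of-the-envelope sketch. It assumes the clip is rendered one frame at a time on a single machine; the 25 frames per second and the two per-frame render times are hypothetical values chosen for illustration, not measurements from our studio.

```python
def total_render_hours(duration_s: float, fps: float, seconds_per_frame: float) -> float:
    """Total render time in hours for a clip rendered one frame at a time."""
    frame_count = duration_s * fps
    return frame_count * seconds_per_frame / 3600


# Hypothetical inputs: a five-minute clip at an assumed 25 frames per second.
CLIP_SECONDS = 5 * 60
FPS = 25

# Two assumed per-frame render times: a light scene and a heavy one.
for label, per_frame_s in [("4 s per frame", 4), ("10 min per frame", 600)]:
    hours = total_render_hours(CLIP_SECONDS, FPS, per_frame_s)
    weeks = hours / (24 * 7)
    print(f"{label}: {hours:.1f} hours ({weeks:.1f} weeks)")
```

Under these assumptions the light scene finishes in a little over 8 hours, while the heavy one needs 1250 hours, or more than 7 weeks of continuous rendering.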

Headjam have purchased four new render nodes (slave computers) to accelerate render times and shorten video production timeframes. Over our network, the studio’s animation workstation controls the slaves. Maya splits up rendering tasks and distributes the workload among all the slave machines on the network. This speeds up the following tasks, and the gain scales with the number of nodes:

- IPR rendering with mental ray for Maya

- Rendering single frames in Maya

- Batch rendering (a batch render started by Maya)

- Command-line rendering

- Texture baking

With a budget around the $5000 (AUD) mark, it was important to get the most bang for our buck. Rather than building one beastly machine with the latest core-heavy Intel Xeon processors, we decided that, at this price point, building multiple smaller machines would yield better rendering performance. The Maya 2016 subscription comes with an extra four Mental Ray Satellite licences, meaning we could run a maximum of four render machines without having to buy extra Mental Ray licences. That leaves us with a budget of around $1250 for each render node.

The most important component to consider when choosing parts for a render node is the CPU, as this is the part that will be doing all of the work, and we can choose the other components around it. We decided on Intel’s i7 4790K and the accompanying Z97 chipset. This gives us rendering performance close to the lower end of Intel’s Haswell-E platform chips but at a much lower price point.

As we are housing the render nodes in Headjam’s server rack, the physical size of the machines was also a significant concern. For this reason we chose the Mini-ITX form factor.

Hardware Selection

CPU

Intel Core i7 4790K - $460

This CPU was an easy choice for the render nodes. While it has only 4 cores (8 virtual with Hyper-Threading), the 4790K makes up for this with a blistering 4 GHz stock clock speed and 4.4 GHz in turbo mode. With these higher clock speeds, it comes close to matching the performance of more expensive CPUs such as the six-core 5820K, but at a substantial cost saving, especially when you factor in compatible motherboard costs. The K in the name 4790K signifies that the CPU clock multiplier is unlocked, allowing for overclocking with adequate cooling. Headjam will not be overclocking our CPUs, however, in order to maintain system stability and reliability.
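As a very crude sanity check on the "close to matching" claim, one can compare cores multiplied by clock speed. This is only a sketch: the 3.3 GHz base clock for the 5820K is an assumption not stated in this article, and real render performance also depends on cache, memory bandwidth and turbo behaviour, so treat the ratio as a rough indication rather than a benchmark.

```python
def core_ghz(cores: int, clock_ghz: float) -> float:
    """Crude throughput proxy: physical cores multiplied by clock speed."""
    return cores * clock_ghz


i7_4790k = core_ghz(4, 4.0)  # 4 cores at the 4 GHz stock clock quoted above
i7_5820k = core_ghz(6, 3.3)  # 6 cores at an assumed 3.3 GHz base clock

ratio = i7_4790k / i7_5820k
print(f"4790K: {i7_4790k:.1f} core-GHz")
print(f"5820K: {i7_5820k:.1f} core-GHz")
print(f"4790K reaches roughly {ratio:.0%} of the 5820K on this proxy")
```

On this proxy the 4790K lands at roughly four fifths of the six-core part.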

CPU Cooler

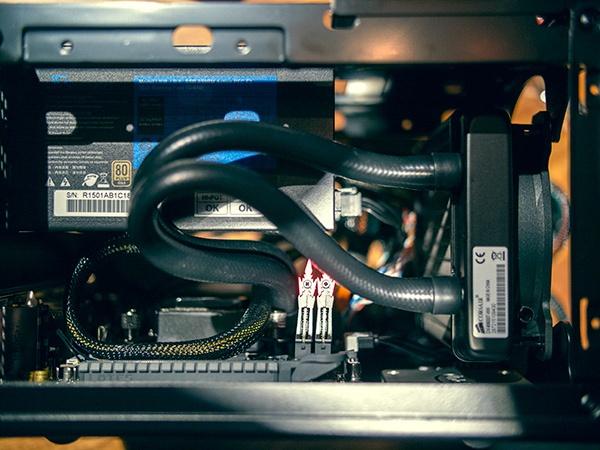

Corsair Hydro Series H60 SE - $99

While rendering, the CPU will be running at full capacity for prolonged periods of time. This can generate a lot of internal heat that can shorten the life of the CPU and hinder performance through thermal throttling. Intel includes an air cooling heatsink with the 4790K, but it’s loud and doesn’t provide enough cooling for our systems. So we’ve included an entry-level all-in-one liquid cooler in our build. The H60 will fit into our small case, provide adequate cooling for the CPU and provide airflow to other components in the system.

Motherboard

Asus Z97I-PLUS Mini-ITX - $205

Asus is a respected name in computer hardware, and we are familiar with the intricacies of its BIOS interface, so we chose to go with an Asus motherboard. This board has the Mini-ITX form factor that we need for our limited space and comes with everything we need at a reasonable price.

Graphics

As these machines are just CPU slaves for the workstation running Maya, no dedicated GPU is required at this stage. We are instead relying on the integrated GPU on the 4790K.

RAM

G.Skill Trident X F3-2133C9D-16GTX 16GB (2x8GB) DDR3 - $195

Cheap, fast-ish RAM. 3D rendering doesn’t usually use heaps of RAM, but with the ITX board having only two slots, it was important to fit enough up front to future-proof the systems.

Case

SilverStone SG05-Lite

This simple, cheap and tiny case allows us to place all four render nodes on one rack-mount shelf while taking up no more space than a 4U rack-mount case. There is room for a 120mm water-cooling radiator and fan on the front intake of the case, and room to add a two-slot PCI-E card should one be required in the future.

PSU

Seasonic G-650 80 Plus Gold 650W

Headjam have used Seasonic power supplies in previous custom machine builds. We have found them to be extremely reliable for our video production team’s mission-critical hardware. Being 80 Plus Gold rated, the G-650 is about as efficient as they come, an important factor when considering power usage costs. 650 watts may seem like overkill for machines likely drawing less than 200 watts at full load, but it gives us the headroom to add power-hungry cards in the vacant PCI-E slots in the future.

SSD

Intel 535 Series

The render nodes do not require large amounts of hard drive space. The nodes need just enough space for the operating system and the files necessary to render a specific frame of video. The 535 is a cheap, fast, low-capacity SSD that meets our requirements.
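To check the parts list against the budget, the arithmetic can be sketched as below. Only the four components with a price quoted in this article are included; the case, PSU and SSD prices are not listed, so the script simply reports how much of the per-node budget is left to cover them.

```python
TOTAL_BUDGET_AUD = 5000  # approximate overall budget from the article
NODE_COUNT = 4           # limited by the four Mental Ray Satellite licences

# Per-node prices quoted in the article (AUD).
priced_parts = {
    "Intel Core i7 4790K": 460,
    "Corsair Hydro Series H60 SE": 99,
    "Asus motherboard": 205,
    "G.Skill Trident X 16GB DDR3": 195,
}

per_node_budget = TOTAL_BUDGET_AUD / NODE_COUNT
priced_total = sum(priced_parts.values())
remaining = per_node_budget - priced_total  # left for case, PSU and SSD

print(f"Budget per node:       ${per_node_budget:.0f}")
print(f"Priced parts per node: ${priced_total}")
print(f"Left for case/PSU/SSD: ${remaining:.0f}")
```

The four priced parts come to $959 per node, leaving about $291 of the $1250 for the remaining three components.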

Operating System and render management

Network Setup

Each slave has a 1Gbps connection to Headjam’s 10Gbps switch, while the master has a 2Gbps link-aggregated connection to the switch. This setup allows render assets to move quickly out to the slaves and alleviates some of the network bottlenecks that can occur when rendering large scenes over the network.
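A rough sketch of why the aggregated master link matters: if the master is the only source of render assets and sends to all four slaves at once, each slave’s share is capped by the master’s uplink as well as its own link. The 2 GB scene size is a hypothetical figure, and the model ignores protocol overhead and assumes the aggregated link balances traffic evenly across the slaves, which real link aggregation does not guarantee.

```python
def per_slave_gbps(master_link_gbps: float, slave_link_gbps: float, slave_count: int) -> float:
    """Bandwidth each slave gets when the master sends to all slaves at once."""
    return min(slave_link_gbps, master_link_gbps / slave_count)


def transfer_seconds(size_gigabytes: float, rate_gbps: float) -> float:
    """Ideal transfer time, ignoring protocol overhead (8 bits per byte)."""
    return size_gigabytes * 8 / rate_gbps


SCENE_GB = 2.0  # hypothetical size of the scene and its textures
SLAVES = 4

for master_link in (1.0, 2.0):  # single link vs. the 2Gbps aggregated link
    share = per_slave_gbps(master_link, 1.0, SLAVES)
    seconds = transfer_seconds(SCENE_GB, share)
    print(f"Master at {master_link:.0f}Gbps: {share:.2f}Gbps per slave, "
          f"{seconds:.0f} s to deliver {SCENE_GB:.0f} GB to every slave")
```

In this simplified model, doubling the master’s uplink halves the time needed to push the same scene out to all four slaves, because the master’s link, not the slaves’, is the bottleneck.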

Benchmarks

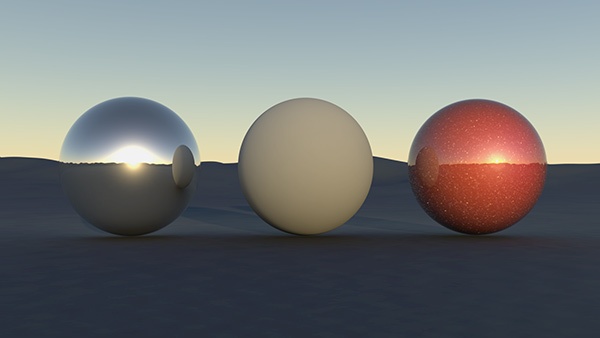

For benchmarking, we set up a simple scene: a plain landscape with some spheres with differing Mental Ray materials, some matte, some reflective. The geometry is quite high resolution, with around four million polygons, and the test frame was rendered at an image resolution of 1920x1080 pixels (see the accompanying image). We dialled up the render quality settings to produce a compute-heavy task that would take a considerable time to render. We rendered with the master machine alone, and then with the slave machines. Here are the results.

Master alone - 47:20 min (previous render time)

With slaves - 15:21 min (new render time)
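The two timings can be turned into a speedup figure with a few lines of arithmetic. The sketch below uses only the two measured times; the parallel-efficiency lines are an assumption-dependent extra, since the article does not say whether the master also rendered alongside the four slaves, so both machine counts are shown.

```python
def to_seconds(mmss: str) -> int:
    """Convert a 'minutes:seconds' string to whole seconds."""
    minutes, seconds = mmss.split(":")
    return int(minutes) * 60 + int(seconds)


master_s = to_seconds("47:20")  # master alone
farm_s = to_seconds("15:21")    # with the slave machines

speedup = master_s / farm_s
reduction = 1 - farm_s / master_s

print(f"Speedup: {speedup:.2f}x")
print(f"Render time reduced by {reduction:.1%}")

# Efficiency depends on how many machines shared the work (an assumption).
for machines in (4, 5):
    print(f"Parallel efficiency with {machines} machines: {speedup / machines:.0%}")
```

The farm renders the test frame a little over three times faster, cutting the render time by roughly two thirds.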